Paper

NeuWigs: A Neural Dynamic Model for Volumetric Hair Capture and Animation

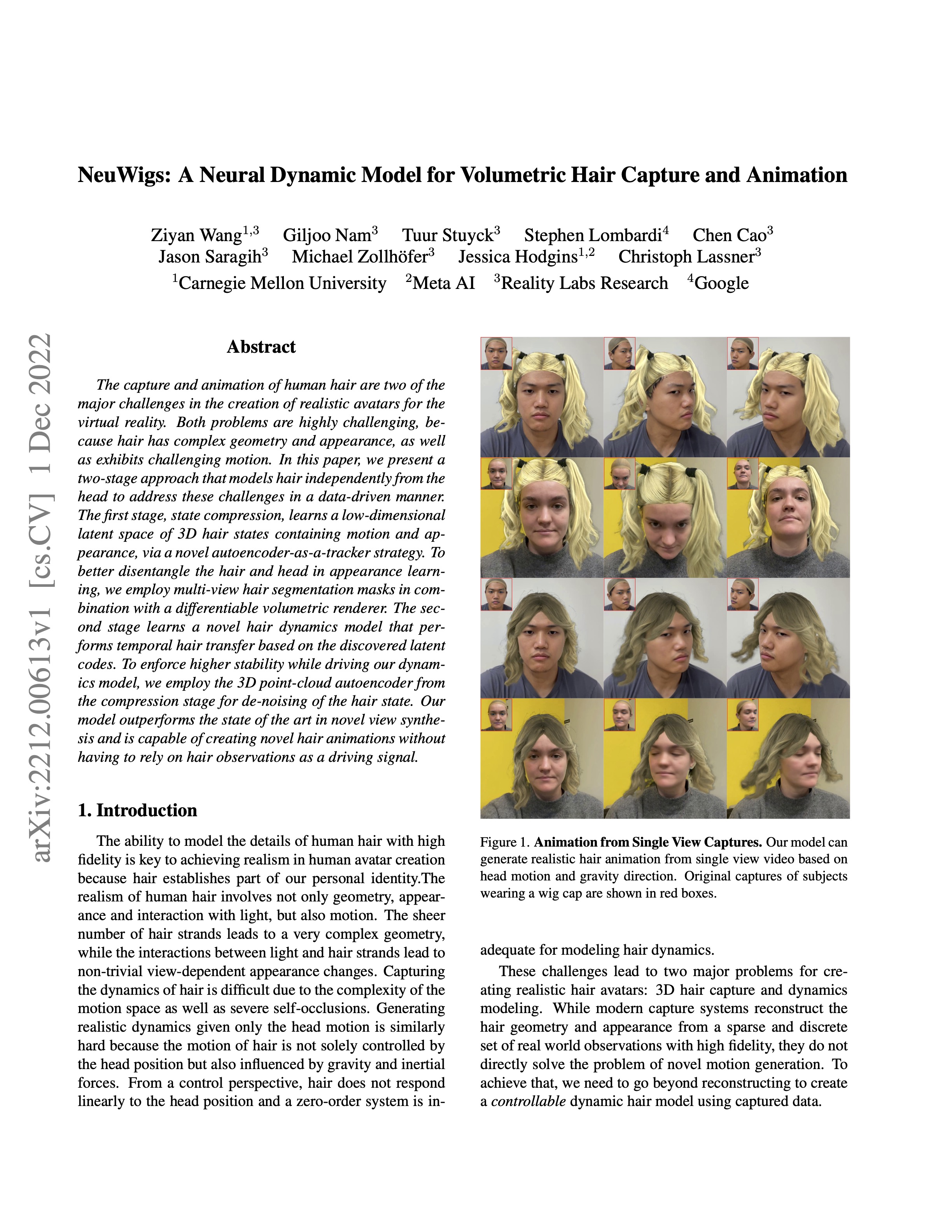

NeuWigs presents a data-driven hair dynamics model that can roll out hair animation. It takes an initial hair state as input and propagates it into possible future states based on the head motion and gravity direction. Here we show results on single-view videos captured with a smartphone, where the animation is driven by the head motion in the video.

The capture and animation of human hair are two of the major challenges in creating realistic avatars for virtual reality. Both problems are difficult because hair has complex geometry and appearance and exhibits challenging motion. In this paper, we present a two-stage approach that models hair independently from the head to address these challenges in a data-driven manner. The first stage, state compression, learns a low-dimensional latent space of 3D hair states containing motion and appearance, via a novel autoencoder-as-a-tracker strategy. To better disentangle the hair and head in appearance learning, we employ multi-view hair segmentation masks in combination with a differentiable volumetric renderer. The second stage learns a novel hair dynamics model that performs temporal hair transfer based on the discovered latent codes. To enforce higher stability while driving our dynamics model, we employ the 3D point-cloud autoencoder from the compression stage to de-noise the hair state. Our model outperforms the state of the art in novel view synthesis and can create novel hair animations without relying on hair observations as a driving signal.

Z. Wang, et al. NeuWigs: A Neural Dynamic Model for Volumetric Hair Capture and Animation

These are results of hair animation on single-view, phone-captured sequences with depth. We use a nodding sequence and a swinging sequence as examples. We first perform keypoint extraction and head mesh tracking on the single-view phone video; the results are shown in the first two columns. To achieve smooth in-the-wild face tracking and resolve scale ambiguity, we use depth as additional supervision for the head mesh tracking. Then, using the head motion, we propagate the static hair into future configurations, shown in the last column.

We show animation results on multi-view captures from a light stage. From left to right, the three columns show the bald-head capture from a side camera, the animation with hair overlaid as seen from the frontal camera, and the animation with hair overlaid as seen from the side camera.

We show animation results of our dynamic model on a range of motions. The hair animation is generated by evolving the initial hair state conditioned on head motion and gravity direction.
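
The rollout described above (evolve an initial hair state step by step, conditioned on head motion and gravity direction, and de-noise each predicted state) can be sketched as a simple autoregressive loop. This is only an illustrative sketch, not the paper's implementation: `toy_step` and `toy_denoise` are hypothetical stand-ins for the learned dynamics model and the point-cloud autoencoder, and the plain lists of floats standing in for latent codes, head motion, and gravity are assumptions made for the example.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def toy_step(code: Vector, head_motion: Vector, gravity: Vector) -> Vector:
    """Hypothetical stand-in for a learned dynamics network.

    Blends the previous code with a scalar drive built from the conditioning
    signals. A real model would be a trained neural network.
    """
    drive = sum(head_motion) + sum(gravity)
    return [0.9 * c + 0.1 * drive for c in code]


def toy_denoise(code: Vector) -> Vector:
    """Hypothetical stand-in for the autoencoder de-noising pass.

    Clamps each entry to [-1, 1] so that errors cannot grow without bound.
    """
    return [max(-1.0, min(1.0, c)) for c in code]


def rollout(
    initial_code: Vector,
    head_motions: Sequence[Vector],
    gravity_dirs: Sequence[Vector],
    step: Callable[[Vector, Vector, Vector], Vector] = toy_step,
    denoise: Callable[[Vector], Vector] = toy_denoise,
) -> List[Vector]:
    """Propagate an initial hair state through a sequence of frames.

    Each predicted state is de-noised before being fed back in, so the loop
    never conditions on hair observations, only on its own previous output.
    """
    states = [list(initial_code)]
    for motion, gravity in zip(head_motions, gravity_dirs):
        predicted = step(states[-1], motion, gravity)
        states.append(denoise(predicted))
    return states


# Three frames of (made-up) head motion under a constant gravity direction.
states = rollout(
    [0.0, 0.5],
    [[0.1, 0.0, 0.0]] * 3,
    [[0.0, -1.0, 0.0]] * 3,
)
print(len(states))  # one initial state plus three predicted states
```

Feeding the de-noised state back in at every step is the design choice the abstract motivates: without it, small prediction errors could accumulate over a long rollout.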